Airtable and n8n: a great fit

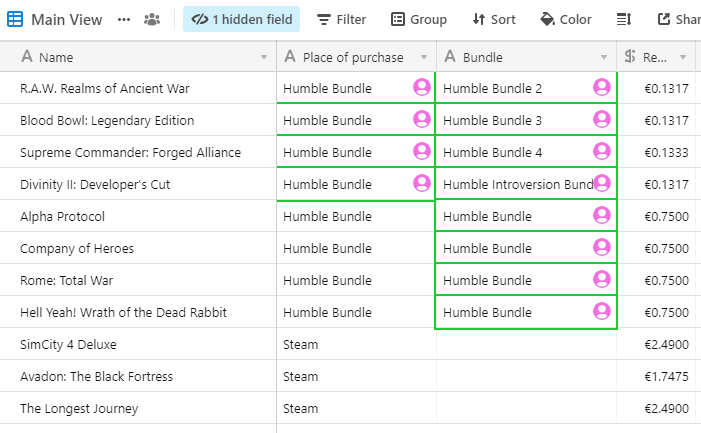

I use Airtable for a few of my databases, for example, a list of the books I have at home, or the games I've bought. Today, I've realized the data in one of the Games table are a bit of a mess and started cleaning them up. After doing this manually for a while, I'd realized it would take a long time, but I could easily script it away. In this post, I'll describe how I did it with n8n. But first:

A short intro to the tools

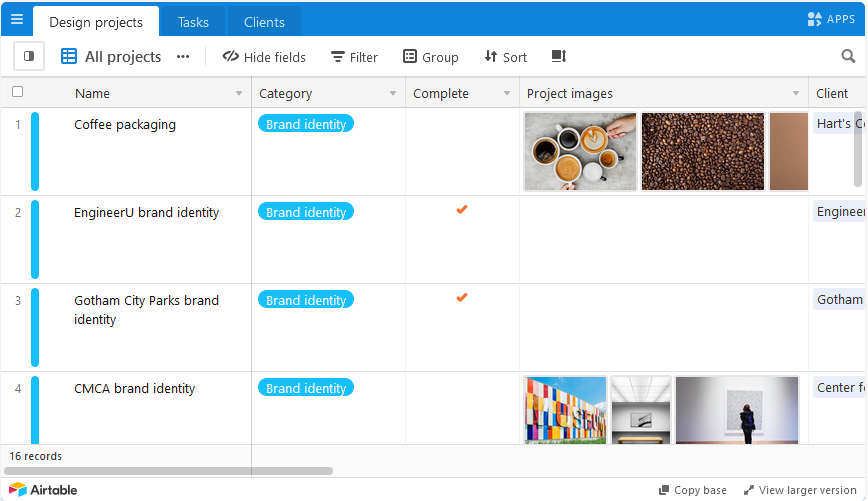

In case you don't know Airtable, it's basically a web-based spreadsheet database. It has a great UI, many user-friendly features, and lets you create links from one sheet (table) to another.

Airtable can do a lot of things with tons of extra features especially in the paid tiers - but they're priced way outside my range, especially considering I only use it for my hobbies (i.e. noncommercially) and haven't even hit the tiny data allowance of the free tier. That is to say - maybe there is a better way to do this just with Airtable Pro, and I just don't know about it.

n8n is an automation tool that lets you easily define data processing workflows. No programming required, but it does need some troubleshooting and experimentation at times, often because the documentation is sparse. It's like IFTTT or Zapier that you can host yourself. There are hundreds of integrations available and you can even roll your own.

I've installed n8n (or 'Natan' as I will be calling it from now on since I like it a lot more that way 😄) a while back out of curiosity and only have 1-2 active workflows there, but this Airtable thing seemed like a good fit. (I do have a lot of automation running already, but I use Huginn for that. I treat Huginn as my C&C and Natan as purely an ETL/integration tool.)

Without further ado, this is what I ended up using:

Fixing the data with a one-off workflow

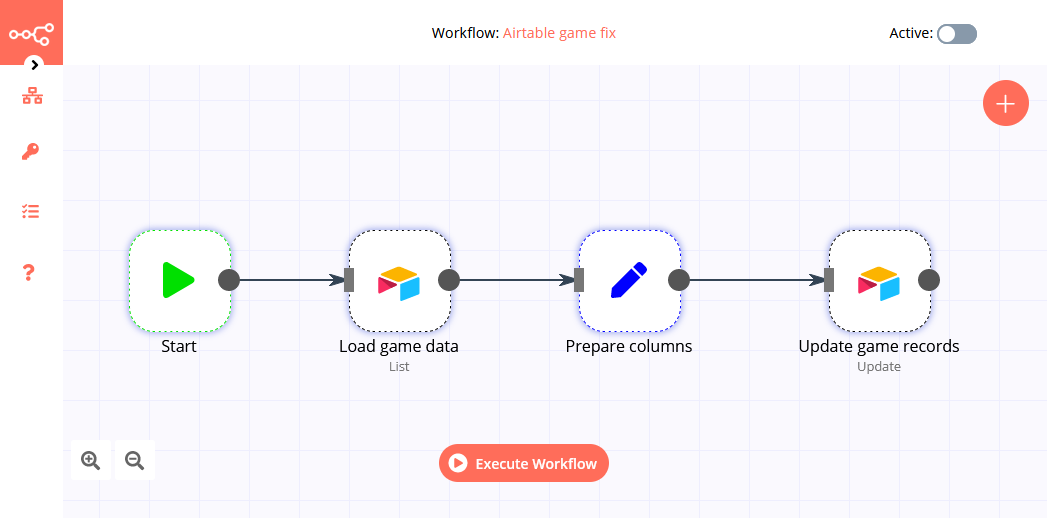

A fairly simple workflow that just loads the data from Airtable, prepares values as needed, and saves them back again. Thanks to Natan's built-in integration, I don't need to deal with things like authentication or paging.

Natan has a great design/test experience - it lets you run each node separately, see the results, then run the next one using the data from the previous node's test run. Or you can run the entire thing. Notice (in the top right corner) the workflow isn't even active. You only need to activate it if you want it to run on a schedule or respond to a webhook, and since I'm only going to run it a few times manually until my data are fixed, there's no need for that.

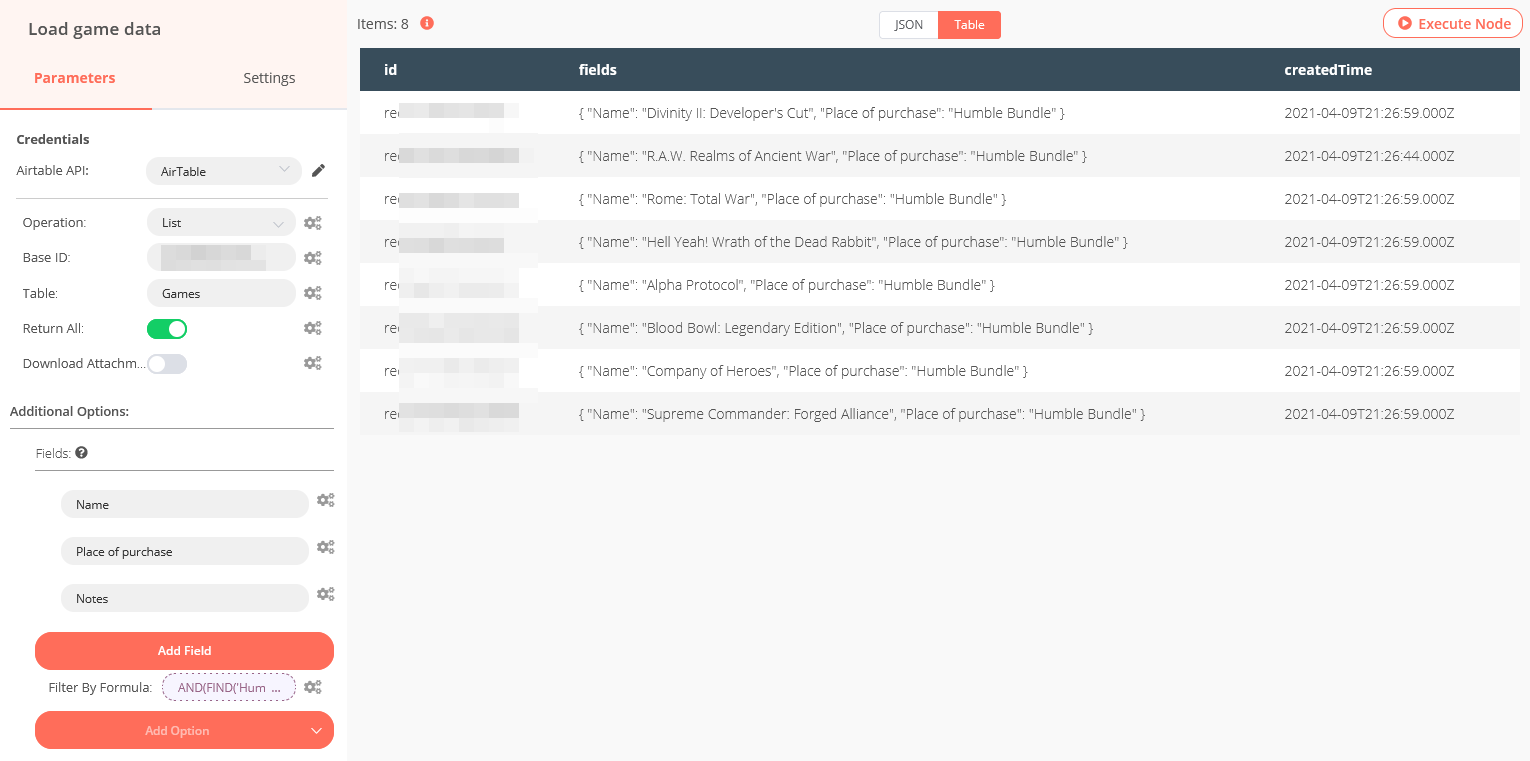

Creating the Credentials is trivial. The Load step is also simple - this is also where you can write your filtering formula to get just the right records.

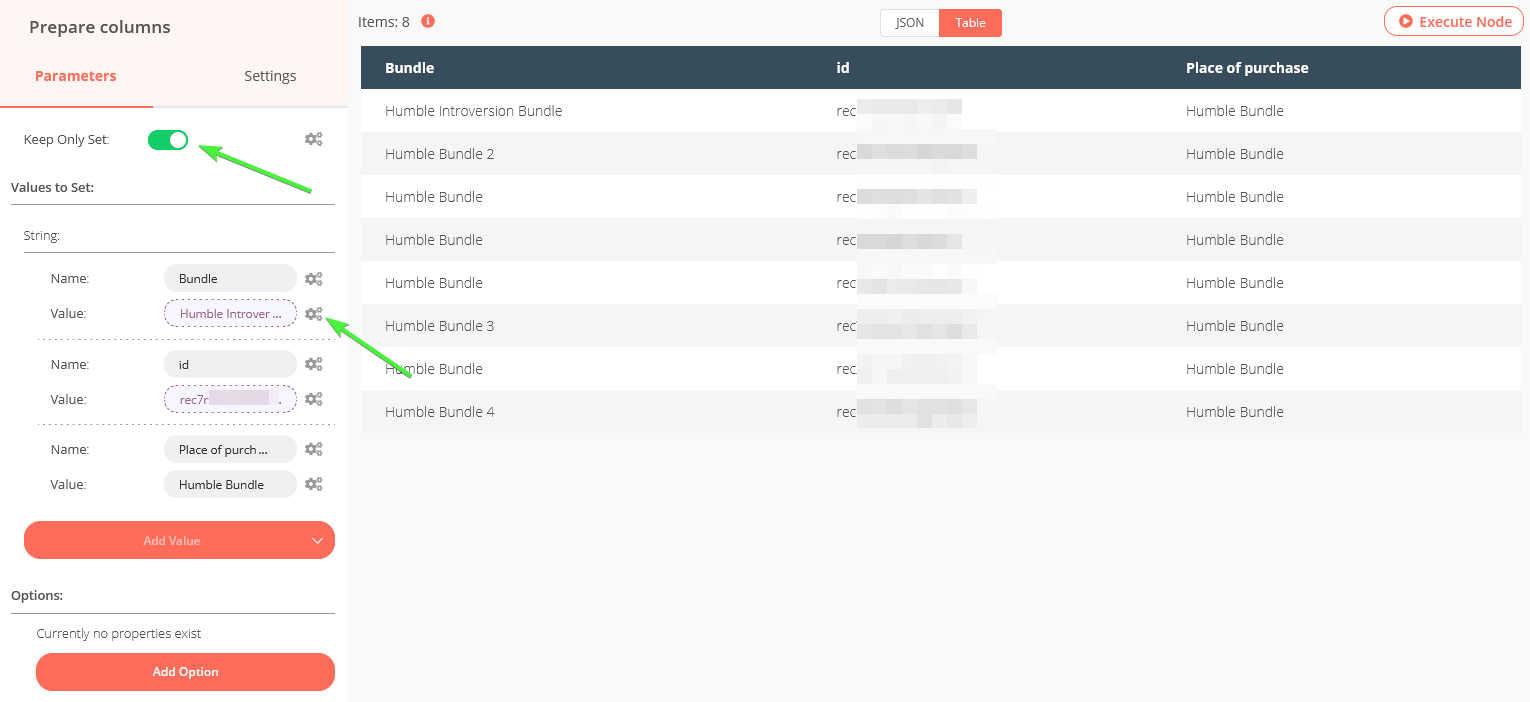

The only gotcha here was that the documentation of the Update step doesn't really say how to set the fields to be updated. After unsuccessfully fiddling with expressions, I've found the solution is quite different: First, prepare and name the fields you want to update - This is what the "Prepare columns" (type Set) action is for. Then send them in a separate step.

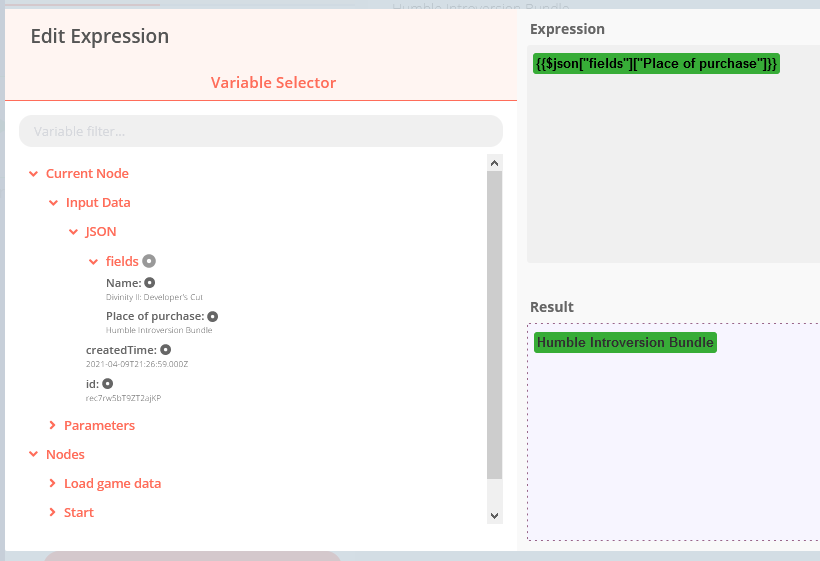

What I actually want to do is: I want to take the value from the "Place of purchase" field, copy it over to "Bundle", and replace "Place of purchase" with a fixed value. So I add the fields and their values:

To specify the value of Bundle should be taken from a different field, click on the Parameter Options (with the gear icon). An expression editor pops up:

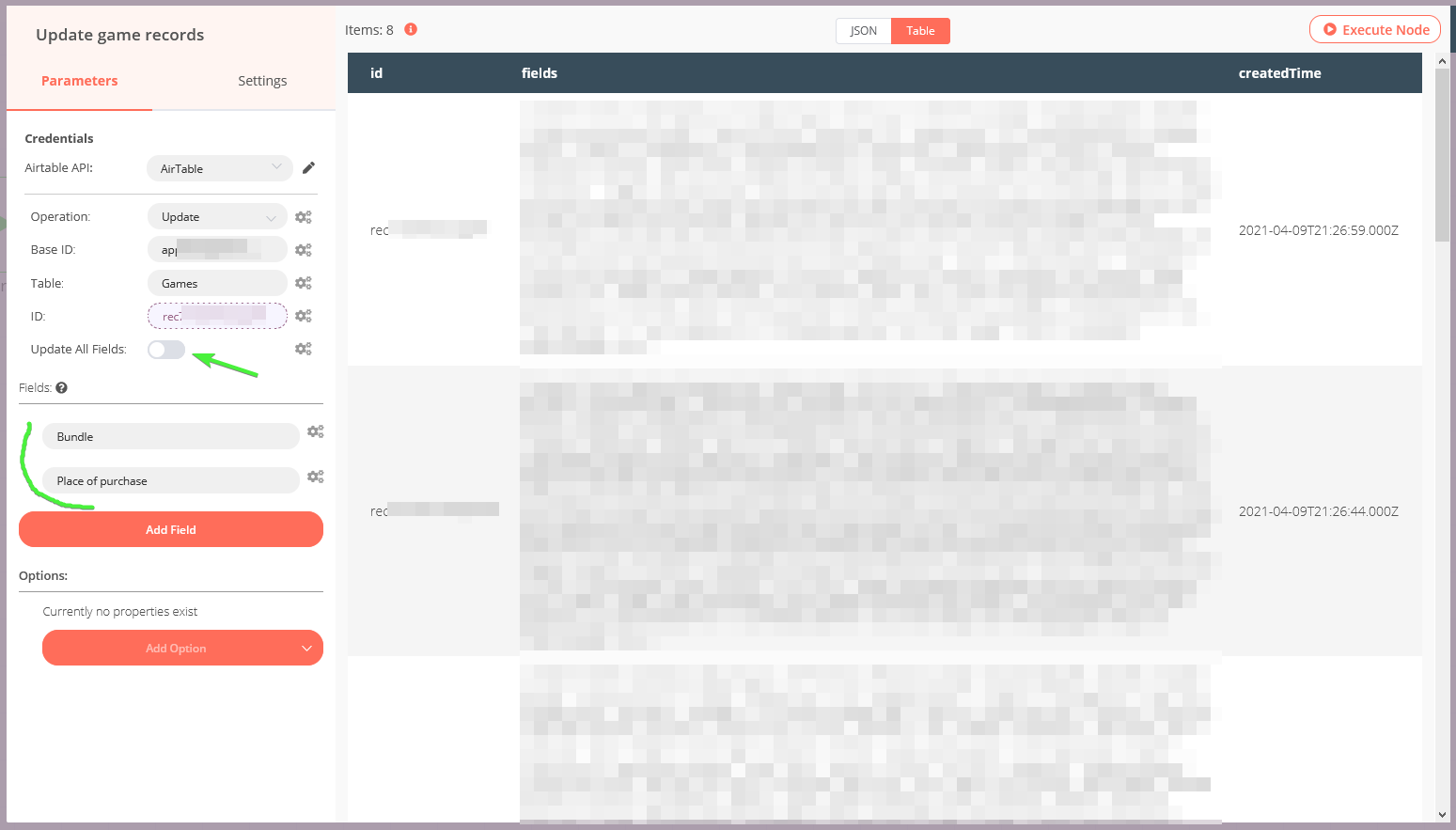

Now that the data is prepared, we only need to configure the update step. Turn off "Update All Fields" to avoid deleting other fields by accident.

The trick here was to prepare the fields with the correct names and values (in the step before) and now just specify their names in the Fields section as indicated.

Each Field will be taken from the input event, and its value used to update the corresponding column in Airtable. All we need to do now is just hit "Execute workflow".

I like how straightforward this all was, and will definitely be using Natan for one-off batch processing in the future. I've never used it to work with Airtable before, but figuring the entire process out from scratch still took much less time than writing this blog post. (😭)

Comments ()